Aside from that little stump, I enjoyed my weekend there very much! There were so many interesting and unique projects presented from all around the world.

These are some of the presentations that I especially liked:

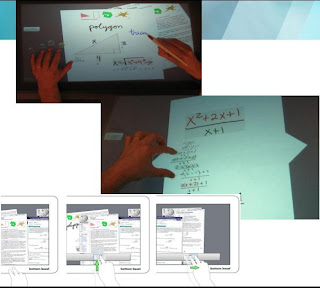

Hands-On Math: A page-based multi-touch and pen desktop for technical work and problem solving

I was very inspired by the different methods of data organization that this application used. Minimizing a portion of the page by pinching or folding it is a very cool technique! It made me think about all the text that needs to be scrolled through on the GnomeSurfer. If we could implement a similar technique, such as having portions of the gene sequence minimized at the start and giving users the option to expand them, that would save users a lot of time and effort scrolling through the entire sequence. (And if we can come up with an awesome animation for expanding and minimizing information like they did in this project, then that'd be even cooler.)

Their idea of implementing a pannable workspace is also very applicable to the GnomeSurfer (we actually had a very similar idea about providing additional workspace to users).

Another presentation that I really enjoyed was Chronicle: Capture, Exploration, and Playback of Document Workflow Histories. Basically, this is a tool that records, annotates, and categorizes the different actions performed on an image in image-editing software. It records brush strokes, layer creations, color adjustments, and any other type of interaction with the image. Anyone using the program can then review the entire editing history. They can even customize the playback so that it focuses on the actions of a specific tool or a particular area of the image.

As a below-average, occasional user of Photoshop, I find this is exactly the type of tool that I need! Every time I try to replicate certain effects from online tutorials, I can never quite capture the exact methodology used. So having a program that can play back the actions performed on an image is just awesome.

Being able to review the process of object creation is such an important feature in most applications these days that it's almost expected.

One of the poster and demo presentations gave me a lot of ideas regarding our superhero costume project. Pinstripe: eyes-free continuous input anywhere on interactive clothing does exactly what it says. The presenters sewed sensors into the fabric of the garment, which lets wearers apply different types of input. Differences in pressure and target area can all be detected and distinguished, which allows so much more freedom in integrating different types of interaction with the garment. The entire time that I was listening to their presentation, I thought to myself: instead of using touch sensors on our Chameleon Suit, which only allow binary inputs, why can't we implement something similar to this technology? Then the wearer of the Chameleon Suit wouldn't even have to wear multiple sensors, because the pinstripes could detect all the necessary types of input.

Overall, I saw some amazing projects and ideas at this conference, and I definitely took away a lot of useful knowledge from it. It was a very fun weekend!
